FOREIGN extremists are using artificial intelligence and social media manipulation to fuel anti-Muslim hatred in Britain, with online incitement rapidly translating into real-world attacks on mosques and communities, new research revealed on Tuesday (10).

The Tell MAMA report warned that contemporary far-right extremism is “no longer confined to domestic networks or isolated online ecosystems, but is increasingly shaped by transnational actors, foreign influence, and rapidly advancing digital technologies”.

Titled The Risk of Foreign Influence on the UK Far-Right and Anti-Muslim Hate, the report found that AI was used to generate racist imagery and propaganda, falsely depicting Muslims as violent and glorifying attacks on police and public infrastructure.

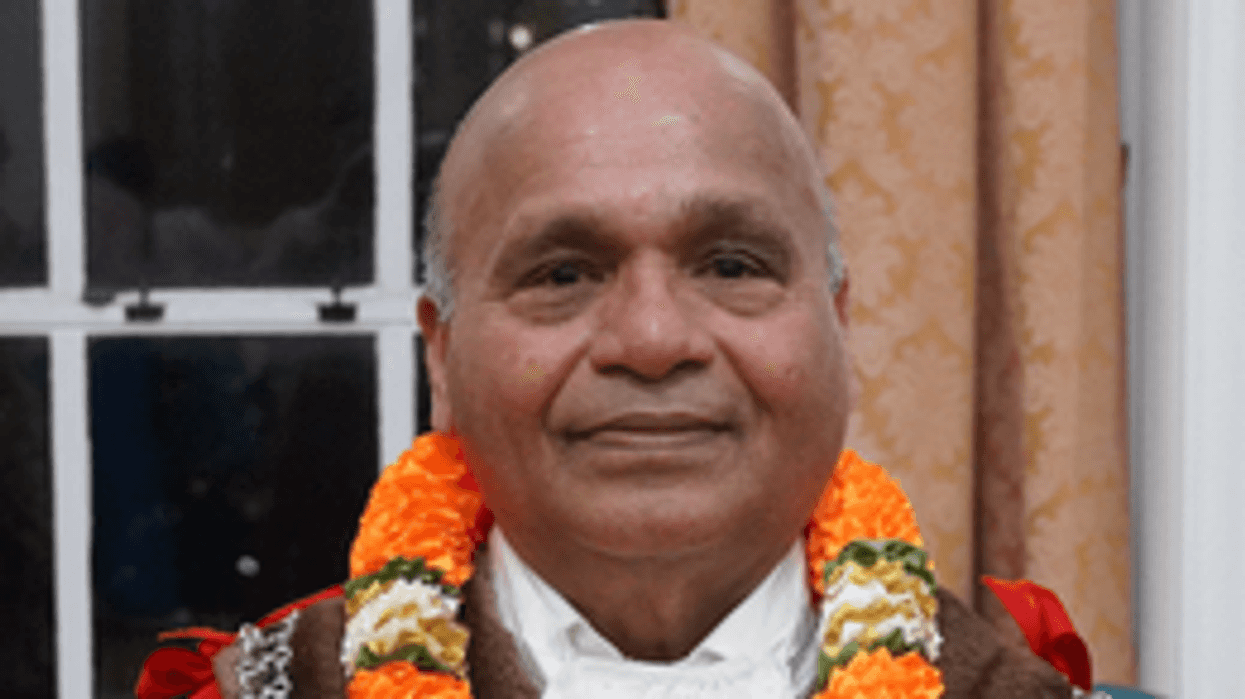

“Artificial intelligence is acting as a force multiplier for anti-Muslim hate, driven by international actors based in Russia,” said Iman Atta OBE, director of Tell MAMA, which measures and monitors anti-Muslim incidents across the country.

“It allows small extremist networks to appear larger, more credible and more threatening than they are.”

Researchers warned that current counter-terrorism frameworks, which “remain largely structured around domestic threat models”, cannot adequately respond to threats that are now “cross-platform, cross-border, and technologically fused”.

The report called for stronger regulation of AI-generated extremist content, recognition of transnational online extremism in UK counter-terrorism strategy, and increased protection and support for Muslim communities and places of worship.

Tell MAMA urged policymakers to “do more to address the risks of racists and extremists using AI to spread fear, encourage violence and recruit”, alongside steps to improve social media literacy skills in view of the rise in online disinformation targeting minority communities.

The latest study centres on a far-right network called “Direct Action” that operated between September 2024 and early 2025, targeting British Muslim communities. The network offered £100 in cryptocurrency to anyone who vandalised mosques with racist graffiti, successfully coordinating attacks on mosques, community centres and a primary school in London and Manchester between January and February 2025.

According to the study, the network exploited social media platforms, using purchased dormant accounts, bots, and hybrid “cyborg” accounts to create a false sense of domestic support. Researchers identified X (formerly Twitter) accounts created years earlier that suddenly became active after the Southport stabbings in July 2024, spamming identical messages encouraging violence.

While the true origins of the network remain unclear, investigators said they found “compelling” evidence of foreign involvement. The group’s main logo was copied directly from a defunct Russian hacktivist Telegram channel called “The Youth of the Saboteur”.

Messages contained tell-tale signs of non-native English speakers, including the pound symbol placed after the figure (2.500£ instead of £2,500), cities misspelt as “Glassgow” instead of Glasgow, and repetitive phrases like “discontented with politics and migrants”, phrasing more typical of automated translation.

The network also used protest footage from Athens, Greece, filmed during 2011 anti-austerity demonstrations, falsely presenting it as UK unrest to encourage violence against police.

Researchers also identified AI-generated images depicting Muslims as terrorists, fake footage of burning police cars with misspelt text, and synthesised voices encouraging violence.

Videos combined real footage from the far-right riots following the Southport tragedy with AI-generated content, falsely claiming the attacker was Muslim and using the sentiment to recruit supporters.

“Behind every online incitement post, propaganda video, and encrypted message thread lies a real-world target: a mosque, a community centre, a family, or an individual,” the report said. “The transition from online incitement to physical vandalism, arson threats, and terror tactics is not theoretical; it is already occurring.”