by NADEEM BADSHAH

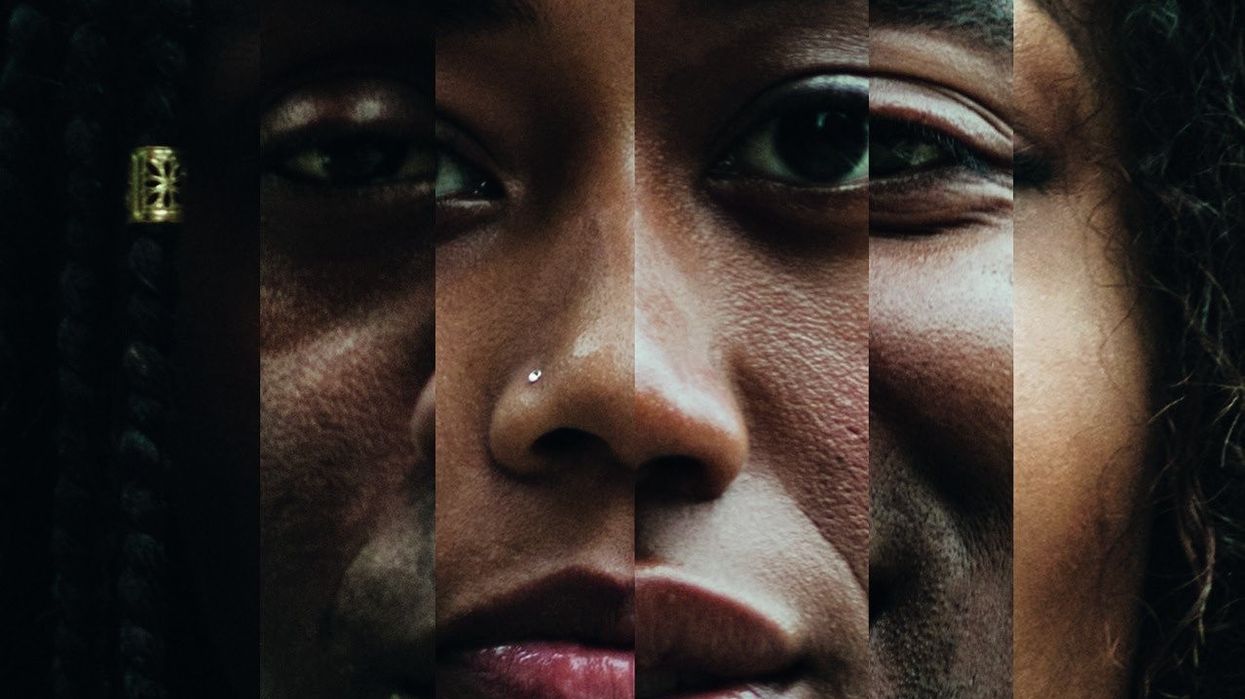

SPY technology, which collects people’s facial and personal data, is unfairly targeting ethnic minorities and needs to be investigated, according to campaigners.

More police forces and private firms are using automated CCTV cameras to collect people’s biometric information at shopping centres, festivals, sports events and protests.

Privacy campaigners have criticised the technology, alleging it is prone to racial bias and that it has led to blunders. And unlike undercover snooping, which requires approval from a senior officer or judge, “overt” spy systems are not covered by any laws.

Activists say trust will continue to erode between BAME communities and the police if the Big Brother-style Automated Facial Recognition is not probed.

Saqib Deshmukh, from Voice4Change England campaign group, told Eastern Eye: “I’m very concerned about the use of facial recognition technology and the issue of greater surveillance in society.

“There is no consent or accountability about Automated Facial Recognition and its use; it’s hugely invasive.

“This is mass surveillance on a grand scale and it’s worrying how this technology meshes with existing discrimination to lead to further injustices.

“We know that it is already being used in our communities despite concerns being raised last year at Notting Hill carnival and in Cardiff, where it was found to be faulty.

“It can also put people in Asian and African Caribbean communities off from going to protests and even just going out in their day to day lives.”

Deshmukh said that in 2016, during an English Defence League march in High Wycombe, Buckinghamshire, he and others challenged the filming of Muslim protesters by police and “the use of Prevent officers to survey who attended”.

“In this context I fully support the call from The Network for Police Monitoring (Netpol) demanding protection from routine surveillance of those who are protesting or campaigning. There needs to be proper oversight and accountability for its use,” he added.

The Metropolitan and South Wales police forces have run trials of live facial recognition systems, which use mobile video cameras hooked up to facial recognition software to scan crowds for faces on a watchlist.

The trials were conducted at football and rugby matches, music festivals and on city streets. And at least half of all fashion retailers also use facial recognition software.

A Cardiff University review of the South Wales police trials found the system flagged up 2,900 possible suspects – but 2,755 were false matches.

Jo Sidhu QC, a leading criminal and human rights barrister, told Eastern Eye: “The public have become used to the deployment of CCTV cameras in streets and public buildings up and down the country. Most people have no objection because they’re doing nothing wrong and it helps to deter criminals.

“But facial recognition cameras and the data they collect allow the police and security services to monitor, target and track perfectly innocent people who may be engaged in lawful protest or simply going about their daily lives.

“As a barrister who has worked to protect human rights and liberties, I share the concern that such intrusive surveillance must not be permitted without proper public debate and parliamentary scrutiny.

“Too much personal information is already collected about us by the government, Facebook and Google.

“Minority communities would rightly feel that a surveillance state would target them disproportionately in the same way that stop and search powers are used against them currently.”

Tony Porter, the surveillance camera commissioner, told a conference in June it was unacceptable no law had been unveiled to control how intrusive technologies were used.

He revealed he had intervened to stop several police forces from using the technology “disproportionately”.

In one case, Greater Manchester police partnered with the city’s Trafford shopping centre to monitor facial data on millions of shoppers. In a trial last year, police cross-checked data collected by the centre against a small watchlist of shoplifters and missing persons.

Over six months only one positive result was achieved, on a man wanted on recall to prison, and the partnership between the force and the mall will not continue.

Weyman Bennett, from the Unite Against Fascism group, said: “The problem is unrecognised institutional racism in the police, identified by the Macpherson report [in 1999], hasn’t been eradicated.

“The danger with the technology is it sees people as enemies rather than citizens, collecting data on them.

“And the refusal of police to acknowledge bigotry and Islamophobia means there is a danger that it could be forcefully exploited to undermine democracy.”

He added: “MI5 and security services have said domestic terrorism among white supremacists is increasing; there is little to suggest this technology is being used to target those people as it is based on stereotypes of criminal behaviour.

“Unlicensed police overwatch of people is a danger, it is stop and search applied digitally – if there is to be trust there has to be transparency of what the data is used for.”

A Home Office spokesperson said: “We support police use of biometrics and other new technologies to help protect the public and bring criminals to justice, but it is essential that they do so to high standards and maintain public trust.

“We welcome the biometrics commissioner’s annual report on the work he has done over the last year to promote compliance with the rules on the use of fingerprints and DNA, which we have published in full.”