STAFF SERGEANT SOHAIL ASHRAF of the British Army has called on the Asian community to consider donating blood.

He said a donation could “save someone’s life in their hour of need”.

The most recent data from NHS Blood and Transplant (NHSBT) shows that fewer than five per cent of donors who gave blood in the last year were from black, Asian and minority ethnic (BAME) communities. This is despite BAME communities comprising around 14 per cent of the UK population.

Ashraf noted the impact of the Covid-19 pandemic on the NHS, but said he believed that the emphasis on the virus may lead to other illnesses being overlooked. The need for blood was still vital, he added.

“People may forget about all the other illnesses that are out there,” he told Eastern Eye. “But the NHS needs that blood, it’s really important.”

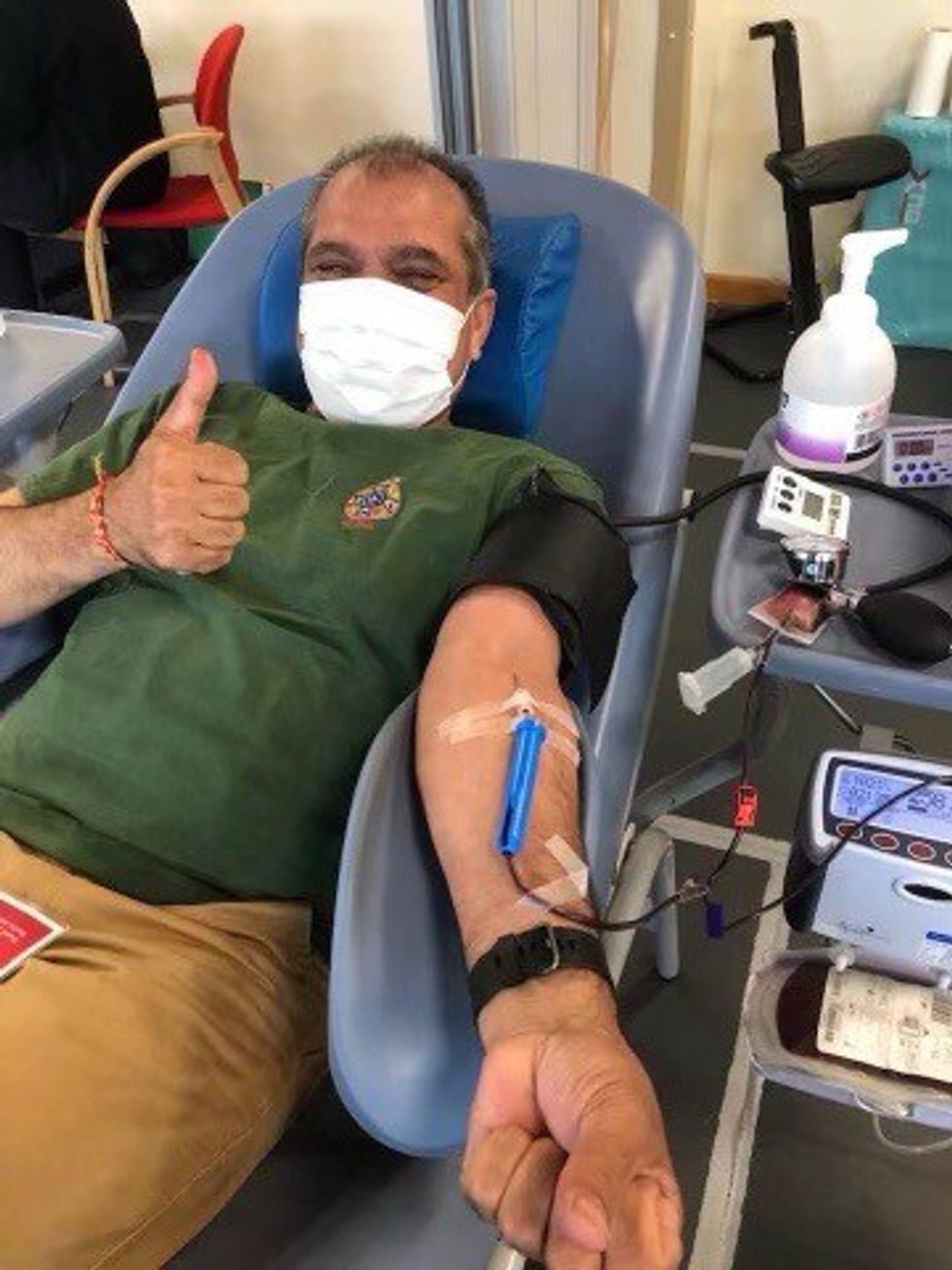

Acharya Krishan Attri is the British Army’s Hindu chaplain. He is also an advocate for blood donation and is a regular donor. Whenever he donates blood, he makes sure to post information on his social media pages to encourage others.

“I put them on social media to show people there is nothing wrong with donating blood and there is a great need for it, especially for those from an ethnic background,” Attri told Eastern Eye.

Ashraf said he first became aware of blood donation when his father died more than 12 years ago. While visiting him in hospital, Ashraf spoke to a nurse who told him about blood donation.

“It brought it home to me when I was speaking to the nurse about the lack of blood donorship from the Asian community,” said Ashraf, who was awarded an MBE in 2018 for his years of continuous work within the BAME community. “I did a bit of digging and found out how it all worked. I decided I wanted to do it because I could save someone’s life.”

He has regularly donated blood ever since. However, he believes the lack of Asian blood donors is down to several misconceptions. For instance, some may be wary of donating blood in case it goes against their religion.

“There may be perceptions within the Muslim community, for example, and they may think they shouldn’t be giving blood,” Ashraf explained. “But the holy books all say, if you can save someone’s life, that’s a good thing.”

There may also be a fear around the procedure itself, he added. “Having a needle inserted and then having a big bag right next to you which starts to fill up with blood… there is definitely a fear factor around it,” he said. “But I think people need to get down into the weeds of knowing how it all works. Giving that amount of blood every six months or so will not make a blind bit of difference [to their health].”

Attri agreed that some people may feel apprehensive about the process. “I’ve seen people feeling scared because they have never done it before, but we have doctors who support them and give them encouragement that there is nothing wrong,” he said. “They just need some encouragement from the community leaders and experienced people.”

Ashraf also believes there is a lack of media attention on the issue. “There needs to be targeted media campaigns to explain the reasons why we need to give blood,” he said.

Attri said services were “desperate” for donations from minority communities. “There is a shortage of blood, especially for people from ethnic backgrounds,” he explained. “People should definitely come forward, to help and support us.”

For more information, see www.blood.co.uk/