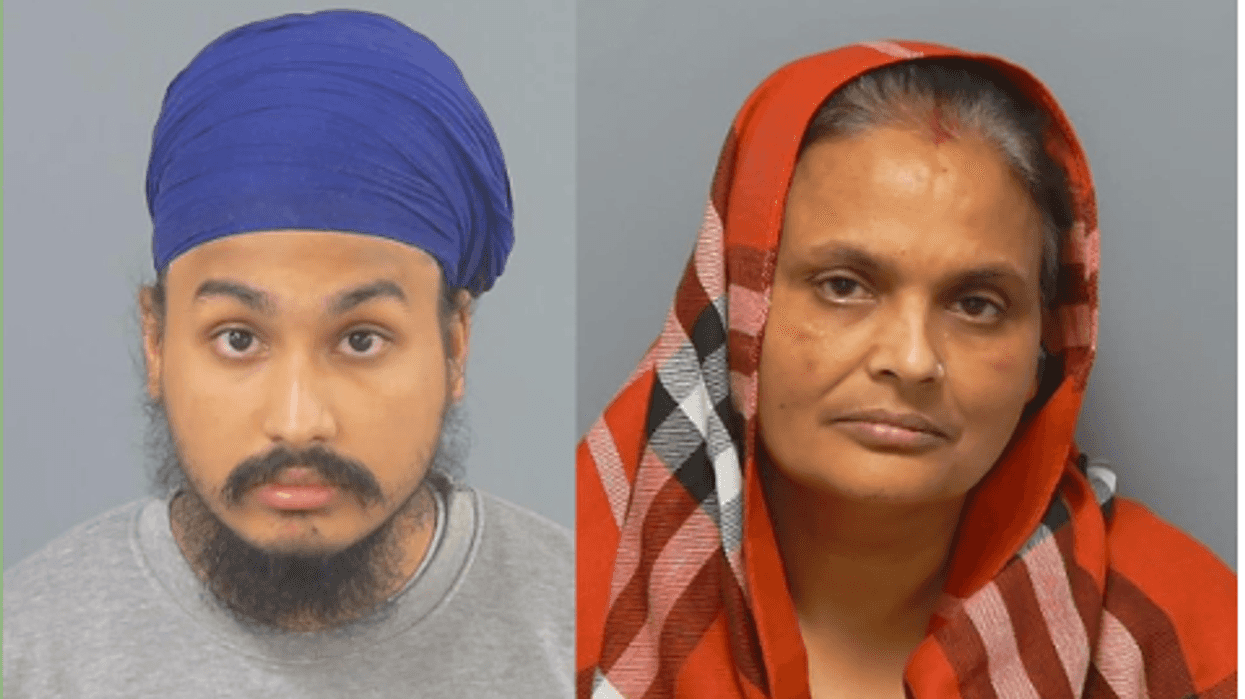

TALENTED Sikh boxer Tal Singh has Amir Khan working with him, with the 26-year-old from Liverpool expected to make his professional debut this summer.

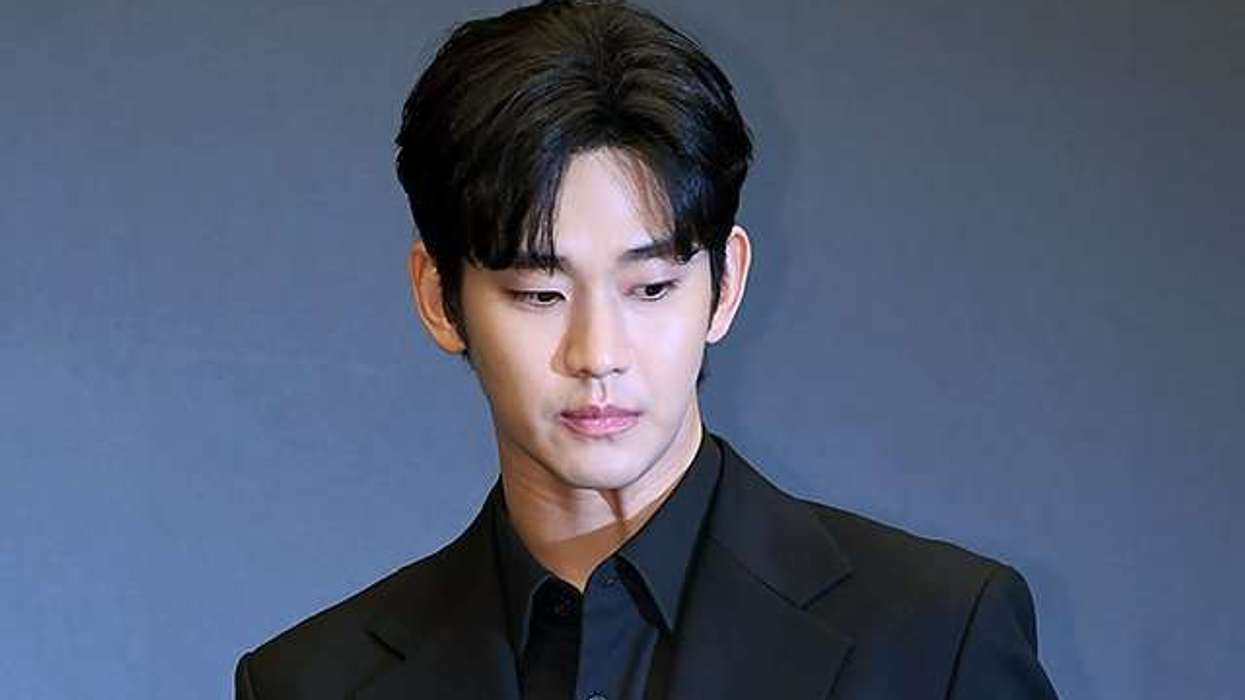

Two-time world champion Khan is putting all his energy into helping Singh become the first-ever Sikh world champion in boxing.

"I’m so happy to be working with Tal and I will be putting all my energy into taking him to the top and becoming the first ever Sikh world champion boxer," Khan said.

"He’s breaking down boundaries, just like I did when I became the first ever British-Pakistani world champion to open the doors for the Muslim community in boxing. I see in him the dedication, mentality and focus to become a world champion. I know what it takes to be a world champion and I see that in him," Khan added.

Light-flyweight Singh began his amateur career at 18, a tad late for a boxer, but his natural talent carried him quickly through the ranks in just 14 fights.

He has already made history: in 2018 he became the first Sikh ever to win the England Boxing National Championship and, incredibly, defended the title the following year.

His potential also caught the eye of former world heavyweight champion David Haye and trainer Ruben Tabares during the many years he trained alongside Haye at the Hayemaker gym.

Not only is he highly rated in the boxing world, he also has a big celebrity following, which includes magician Dynamo, rapper Tinie Tempah, WWE champion Jinder Mahal and singer Jay Sean, to name a few. Singh believes his success in the boxing ring will inspire others from his community to take up the sport.

"I’m really excited for what the future holds, having Amir behind me and creating something special legacy-wise for the both of us being in one team! I’ve been working really hard for many months alongside Amir in Bolton.

"I know there is a lot of pressure on me being Amir’s first and only fighter that he’s looking after, but I’m confident I will deliver on everything he believes and sees in me. I really do think we can do something special together and we're thrilled to share this news with the world," Singh said.