ENGLAND'S limited-overs captain Eoin Morgan wants the cricket board to tackle the Yorkshire racism row "head on", insisting there was no place for any kind of discrimination against any player.

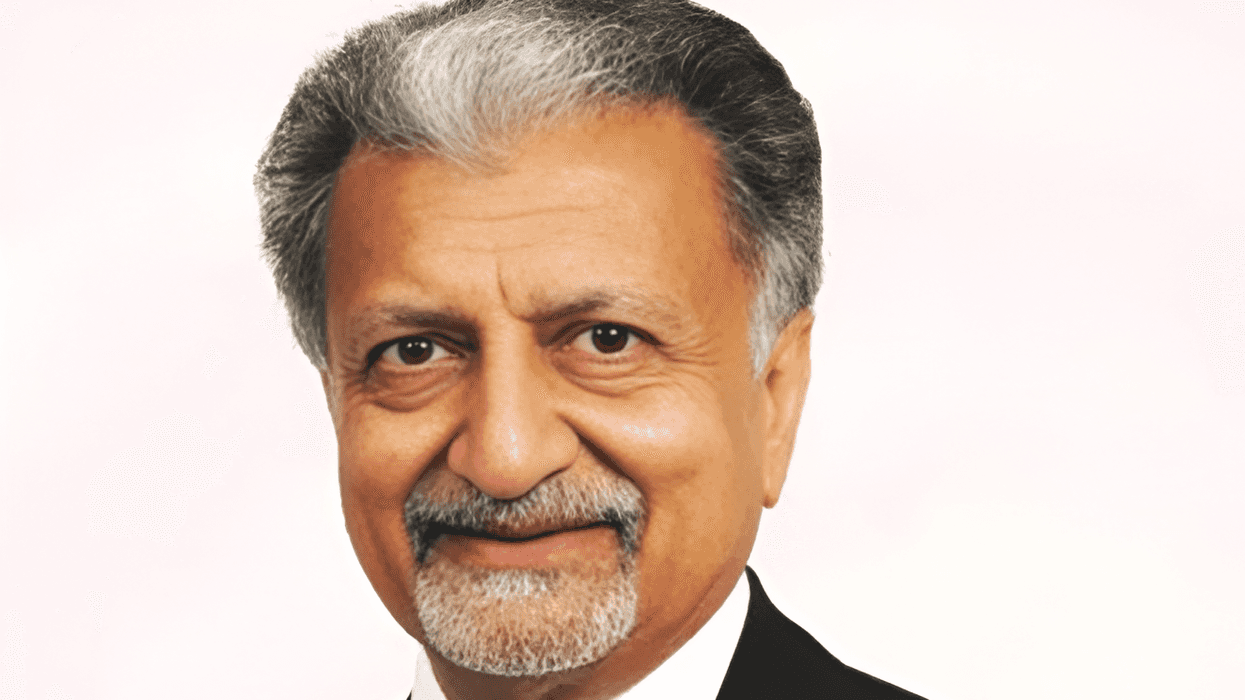

The England and Wales Cricket Board (ECB) has been rocked by former player Azeem Rafiq's allegation of racism at Yorkshire County Cricket Club, which has been suspended from hosting international or major matches.

Club chairman Roger Hutton has resigned over the club's handling of allegations made last year by former England under-19 captain Rafiq, who is of Pakistani descent.

"I think if they're matters of an extreme or serious nature like these are, they need to be met head on, and for us as a team, that's exactly what we want to see," Morgan told a news conference ahead of Saturday's (November 6) Twenty20 World Cup contest against South Africa.

"We firmly believe that there is no place in our sport for any type of discrimination.

"I think the actions of ECB board to Yorkshire have indicated how serious they are about dealing with issues like this ... obviously those actions speak louder than words."

Yorkshire batsman Gary Ballance, who said he had used racist language towards his former teammate Rafiq, has been indefinitely suspended from England selection.

Asked for his view, Morgan said: "I think the decision that was taken at the start of last summer in a similar instance with Ollie Robinson is consistent with the board's decision with Gary Ballance."

Fast bowler Robinson was suspended from international cricket in June for historical racist and sexist tweets.

Morgan said his team, who often take a knee before matches as an anti-racism gesture, were trying to usher in change.

"For probably the last two to three years, our culture has been built around inclusivity and diversity. It's actually been quite a strong part of our game," the 35-year-old said.

"For that period of time in particular, we've been active about talking and actioning things that show meaningful change."

"It's not perfect, but we're making good ground towards change that we want implemented."

(Reuters)