- Sextortion reports from under-18s rose 34 per cent last year

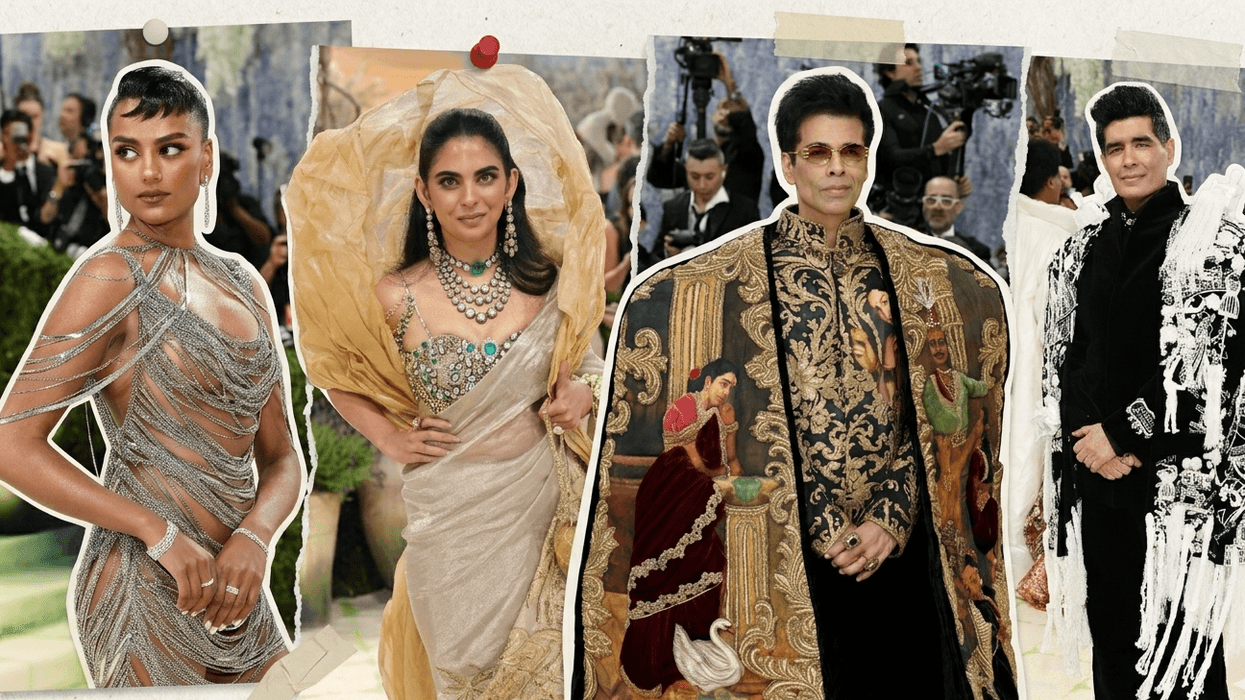

- Schools are being advised to use blurred, distant or rear-facing photos — or none at all

- One private school group has already redesigned its website to remove recognisable pupil images

SCHOOLS across the UK are being urged to remove pictures of pupils from their websites and social media pages after criminals used artificial intelligence to turn children's photos into sexually explicit images and demand money.

Child safety experts and the National Crime Agency (NCA) have warned that blackmailers are taking images from school websites, using AI tools to manipulate them into illegal material, and then threatening to release them unless they are paid, the Guardian reported.

The warning follows a recent case in which an unnamed British secondary school was targeted in exactly this way. The Internet Watch Foundation (IWF), which monitors child sexual abuse material online, said 150 of the images produced in the attack were illegal under UK law.

The watchdog converted the images into digital fingerprints and shared them with major technology platforms to prevent them being uploaded online.

The IWF said the school was not the only institution to have been targeted in this way, though the problem is not yet widespread. An advisory body on online harms has warned, however, that it is "only a matter of time" before more schools are attacked.

Guidance issued to schools recommends they replace close-up, face-on portraits with photos taken from a distance, images shot from behind pupils, or blurred pictures. Schools are also being told to think carefully about whether they need pupil photos at all. Labelling pictures with a child's full name increases the risk, the guidance adds, as does leaving school social media accounts visible to the general public.

'Contact police immediately'

Schools that are targeted have been advised to contact police immediately, keep any criminal images as evidence and remove the original photos that were used.

Jess Phillips, the minister for safeguarding and violence against women and girls, said the attempted blackmailing of schools was a “deeply worrying emerging threat” and that laws on the use of AI to create explicit images would be updated if necessary. A ban on possessing AI models designed to generate child sexual abuse material (CSAM) has already been announced.

“We will not hesitate to go further if necessary and make sure our laws stay up to date with the latest threats,” she was quoted as saying.

Leora Cruddas, chief executive of the Confederation of School Trusts, whose academies educate more than four million children in England, said schools would try to find the right balance between recognising pupils' achievements and keeping them safe.

"It is deeply depressing that in doing so we potentially have to contend with threats from abusers and scammers," she said.

According to reports, the crime of blackmailing people over intimate images, known as sextortion, has grown sharply in recent years, accelerated by advances in AI. It has been linked to the deaths of several British teenagers.

The Report Remove service, which helps children flag explicit images of themselves online, received 394 reports of blackmail attempts from under-18s last year, a rise of 34 per cent on the previous year.

The NCA has linked sextortion gangs to west Africa, including Nigeria. It is understood the school attack involved language from scripts commonly used by such gangs.

Some schools, meanwhile, have already acted. The Loughborough Schools Foundation, which runs three private schools, redesigned its website last year to remove recognisable images of pupils.